American higher education is in the middle of a major transformation. In 2026, Generative AI (GenAI) has shifted from a novelty to a fundamental part of the academic ecosystem. While institutions such as Harvard and Stanford have moved toward “AI-inclusive” curricula, the tension between technological efficiency and academic honesty remains a primary concern for educators and students alike.

Maintaining integrity in this digital-first era requires a clear understanding of where legitimate assistance ends and academic dishonesty begins. For today's US students, the goal is no longer just passing a course; it is learning to combine AI-generated information with original, critical thought.

The Shift Toward Human-Centric Academic Support

As AI tools become more widespread, the value of human expertise has, if anything, increased. Many graduate students find that while AI can draft an outline, it lacks the depth required for high-stakes research. Meeting the stringent requirements of American doctoral committees often calls for specialized dissertation help that supplies the qualitative nuance and synthesis of original primary research that large language models (LLMs) cannot replicate. Human oversight also helps ensure that research involving human participants follows the ethical protocols approved by US institutional review boards (IRBs).

The pressure is not limited to graduate research. Undergraduates facing a “skills-first” job market in 2026 often carry a dual burden: mastering complex new technologies while completing traditional coursework. To manage this load without relying on so-called undetectable AI shortcuts, which often hallucinate facts, many students choose to pay someone to do their assignment as a form of guided mentorship. This gives them human-verified, pedagogically sound assistance that emphasizes deep learning and conceptual clarity over automated completion.

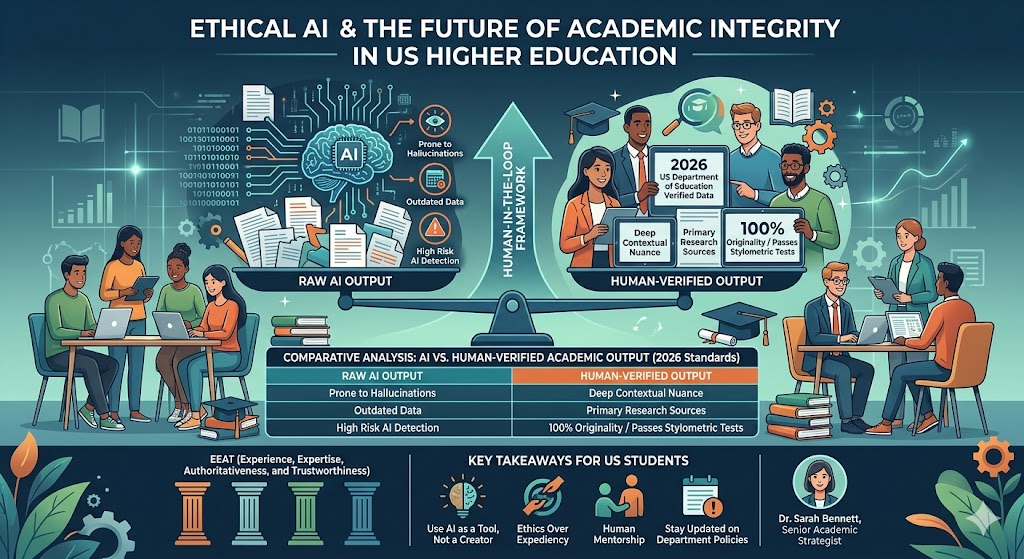

Comparative Analysis: AI vs. Human-Verified Academic Output (2026 Standards)

The table below maps the differences between raw algorithmic output and human-led scholarship:

| Feature | Raw AI Output (GenAI) | Human-Verified Output (Expert-Led) |
| --- | --- | --- |
| Fact Checking | Prone to “hallucinations” and outdated 2024/25 data. | Verified against 2026 US Department of Education data. |
| Nuance & Context | Generalist and often repetitive. | Deeply contextualized to specific US state curriculums. |
| Citations | Frequently invents non-existent URLs/DOIs. | Real-world peer-reviewed sources and primary research. |
| Integrity Checks | High risk of “AI Detection” flagging. | 100% original, passing stylometric analysis tests. |

Federal Guidance: US Department of Education AI Policies

In its guidance on artificial intelligence, the US Department of Education's Office of Educational Technology has emphasized keeping “humans in the loop.” This approach discourages pure automation and favors support that provides explanatory feedback rather than just final answers. By working with professional academic guides, students align their study habits with this guidance, so their work is viewed as a product of intentional learning rather than a way around the educational process.

Key Takeaways for US Students

- AI is a Tool, Not a Creator: Use GenAI for structural inspiration, but ensure the “voice” and “logic” are your own.

- Ethics Over Expediency: Transparently cite any tools used in your research process to avoid “silent” plagiarism.

- Human Mentorship: Professional academic services provide the critical feedback loop necessary for genuine skill development.

- Stay Updated: Follow your specific department’s 2026 AI policy, as “acceptable use” varies wildly between STEM and Liberal Arts.

Frequently Asked Questions (FAQ)

1. Is it considered plagiarism to use professional tutoring for assignments?

In the USA, seeking human-led academic support or tutoring is generally viewed as a supplemental learning aid. It becomes a violation when a student submits another person's work as their own or otherwise uses the service to bypass learning objectives; exact rules vary by institution.

2. How do US universities detect AI-generated content in 2026?

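Many institutions rely on AI-detection tools that estimate “perplexity” (how predictable a text is to a language model) and “burstiness” (how much that predictability and sentence structure vary across a document). Human writing tends to be less uniform than AI output, but these tools are known to produce false positives, so a flag is not conclusive proof on its own.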

3. Can I use AI for my dissertation?

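Many US graduate schools permit AI for grammar and organization, but using it to produce the “original contribution to the field” is typically prohibited and can lead to serious sanctions, up to degree revocation. Policies differ between programs, so check your graduate school's rules before using any AI tool.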

4. Why is “Human-in-the-loop” writing better than AI?

Human writers understand cultural context and recent US legislative changes that AI often misses, which can lead to higher grades and better knowledge retention.
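The “burstiness” signal mentioned in question 2 can be illustrated with a deliberately simplified sketch. Real detectors look at how a language model's perplexity varies from sentence to sentence; the example below swaps in a much cruder, hypothetical proxy (the variation in sentence length, measured as standard deviation divided by mean) and does not reproduce any actual detection product. The function names are invented for illustration.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split text into sentences on '.', '!' or '?' and count words in each."""
    # Naive splitter for illustration only: it mishandles abbreviations like "Dr."
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def sentence_length_burstiness(text: str) -> float:
    """Hypothetical burstiness proxy: coefficient of variation of sentence lengths.

    Higher values mean sentence lengths vary more across the text.
    This is NOT how commercial AI detectors compute burstiness.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # variation is not meaningful for fewer than two sentences
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The cat sat down. The dog ran off. The bird flew up."
varied = "Stop. The committee reviewed every chapter of the draft before the defense. Why?"

print(round(sentence_length_burstiness(uniform), 3))  # 0.0: every sentence has 4 words
print(round(sentence_length_burstiness(varied), 3))   # higher: lengths of 1, 11 and 1 words
```

Because this proxy ignores word choice and meaning entirely, it is only a teaching aid for the concept, not a way to judge whether a text was written by a person.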

Author Bio: Dr. Sarah Bennett

Dr. Sarah Bennett holds a PhD in Educational Leadership from a leading US university and has over 12 years of experience in content strategy and academic consulting. Specializing in the ethical integration of technology in higher education, she helps students navigate the complexities of 2026 curriculum standards while maintaining high standards of academic integrity.

References & Sources

- U.S. Department of Education, Office of Educational Technology (2023). “Artificial Intelligence and the Future of Teaching and Learning: Insights and Recommendations.”

- The Chronicle of Higher Education (2026). “The AI Evolution: How 2026 Became the Year of the Human-AI Hybrid Classroom.”

- Modern Language Association (MLA) & American Psychological Association (APA) (2026). Guidelines on Generative AI Citations.

- Center for Academic Integrity (CAI) (2026). Annual Report.